Hadoop Distributed File System

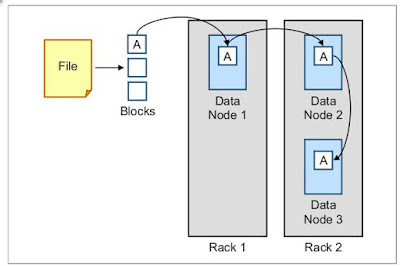

Hadoop Distributed File System (HDFS) is the file system that Hadoop uses to store data, and it stores that data in a distinctive way. When a file is saved in HDFS, it is first broken down into fixed-size blocks, with any remaining data occupying a final, smaller block. The block size depends on how HDFS is configured. At the time of writing, the default block size for Hadoop is 64 megabytes (MB). To improve performance for larger files, this setting is often raised to 128 MB per block at installation, which is also the default in Hadoop 2.x and later. Each block is then sent to a different data node and written to its hard disk drive (HDD). After the first data node writes the block to disk, it sends the data to a second data node, which writes the block as well. When that write completes, the second data node sends the data to a third data node. The third node confirms completion of the write to the second, which in turn confirms it to the first. The NameNode is then notified and the block write is complete. After all blocks are w...
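The block splitting and the three-node write sequence described above can be illustrated with a small simulation. This is only a sketch under stated assumptions, not HDFS code: the function names `split_into_blocks` and `pipeline_write` and the node labels `dn1`, `dn2`, and `dn3` are hypothetical, and the model follows the simplified order of events given in the text rather than the behavior of a real cluster.

```python
MB = 1024 * 1024


def split_into_blocks(file_size, block_size=64 * MB):
    """Return the size in bytes of each block for a file of `file_size` bytes.

    Every block is `block_size` bytes except possibly the last one,
    which holds whatever data remains.
    """
    if file_size < 0 or block_size <= 0:
        raise ValueError("file_size must be >= 0 and block_size must be > 0")
    full_blocks, remainder = divmod(file_size, block_size)
    sizes = [block_size] * full_blocks
    if remainder:
        sizes.append(remainder)
    return sizes


def pipeline_write(block_id, nodes):
    """Simulate the write sequence from the text for one block.

    Each node writes the block and forwards it to the next node; the
    confirmations then travel back from the last node to the first,
    after which the NameNode is notified.
    """
    events = []
    for i, node in enumerate(nodes):
        events.append(f"{node}: wrote block {block_id}")
        if i + 1 < len(nodes):
            events.append(f"{node} -> {nodes[i + 1]}: forward block {block_id}")
    for i in range(len(nodes) - 1, 0, -1):
        events.append(f"{nodes[i]} -> {nodes[i - 1]}: confirm block {block_id}")
    events.append(f"NameNode notified: block {block_id} complete")
    return events


# A 200 MB file with 64 MB blocks: three full blocks plus an 8 MB remainder.
print([size // MB for size in split_into_blocks(200 * MB)])  # [64, 64, 64, 8]

# Hypothetical data node names, used for illustration only.
for event in pipeline_write(0, ["dn1", "dn2", "dn3"]):
    print(event)
```

With a 128 MB block size, the same 200 MB file would occupy two blocks (128 MB and 72 MB), which shows why larger blocks reduce the number of blocks that must be tracked for large files.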